Study finds kids targeted with graphic content hours after joining social media


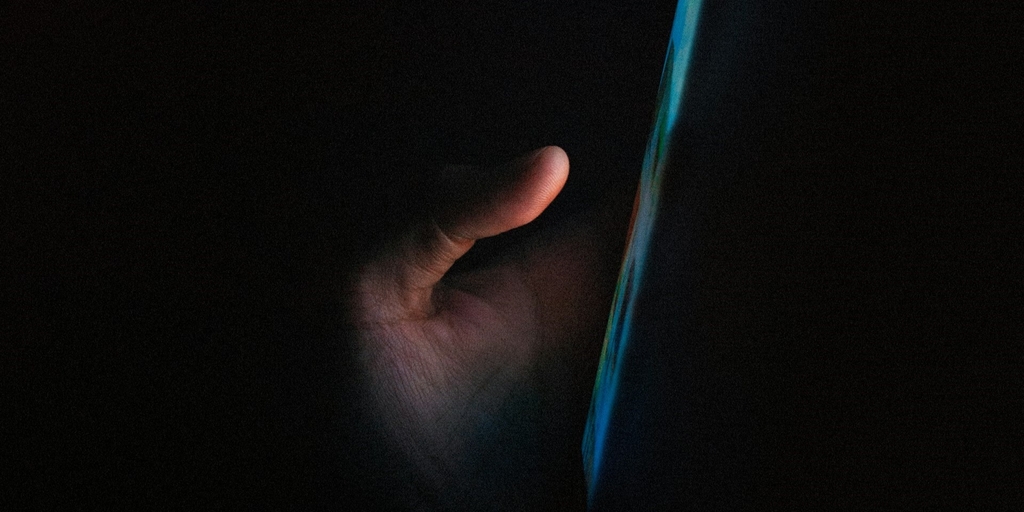

Children are being targeted with graphic content within hours of setting up a social media account, a new report has found.

Research commissioned by the children's safety group 5Rights Foundation demonstrated that young social media users are bombarded with disturbing content, including images of self-harm and pornography.

The report raised concerns about the algorithms social media companies use to suggest new content based on a user’s activity. It found that while young users are shown age-appropriate material, such as adverts for college courses, they are also recommended sexual or self-harm content.

It also warned of the potential for body dysmorphia and eating disorders, noting that "a child who clicks on a dieting tip, by the end of the week, is recommended bodies so unachievable that they distort any sense of what a body should look like".

Commenting on the research, Ian Russell, whose daughter Molly took her own life aged 14 after viewing graphic self-harm and suicide content online, said:

"The priority of the platforms is profit. They are designed to keep people on there as long as possible with scant thought for the safety of the people, particularly young people online, so that's what has to change.

"People's safety has to come first so that they are not led down these rabbit holes, the algorithms don't push ever more harmful content to the people who are using their platform."

From September, new rules will come into force placing a duty on social media companies to provide age-appropriate algorithms or face fines and other punishments.

The UK Government is also expected to introduce online safety legislation to place curbs on social media companies that fail to protect vulnerable users, and tackle ‘harmful’ content.

However, Ministers have come under fire for refusing to enforce measures to compel commercial pornography sites to verify the age of users and remove ‘extreme content’.

A spokesman for CARE said:

“Reports like this show just how unsafe the online world is for our children and young people. Graphic and disturbing content is available to view at the touch of a button, from a very young age. Legislators should consider tougher action on social media platforms which are currently failing to protect users.

“It is also vitally important that the government takes tougher action against pornography websites. To date, Ministers have refused to enforce measures already on the statute book to require age checks for access to commercial porn sites and punish platforms that host extreme content. For the sake of children and women, the government needs to do better.”
